Esteban and Ben have been working to get ZigFu+Kinect+Unity to “see” six players and to measure changes in the width, height, and depth of each player, so that we can interpret each player’s gestures. The challenge is that most gesture interpreters rely on skeleton detection, and the ZigFu+Kinect combination can only detect two skeletons. This forces us to attempt lower-level (closer to the code) solutions. We want to maximize the number of players detectable by each sensor. Ben and Esteban are learning C++ by hacking their way through the scripts provided by ZigFu and modifying them.
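To make the "lower-level" idea concrete, here is a minimal sketch in Python of measuring each player's width, height, and depth without any skeleton data. It assumes the sensor middleware can hand us a per-pixel label map (0 for background, a nonzero player index otherwise) alongside a depth map in millimeters. That input format, and the names `player_extents`, `labels`, and `depth_mm`, are assumptions made for illustration, not ZigFu's actual API.

```python
from typing import Dict, List, Tuple

# (width in pixels, height in pixels, depth range in millimeters)
Extent = Tuple[int, int, int]


def player_extents(labels: List[List[int]],
                   depth_mm: List[List[int]]) -> Dict[int, Extent]:
    """Compute a bounding extent for every player in one frame.

    labels[y][x] is 0 for background or a player index (assumed format).
    depth_mm[y][x] is the distance from the sensor at that pixel.
    """
    # Per player: [min_x, max_x, min_y, max_y, min_z, max_z]
    bounds: Dict[int, List[int]] = {}
    for y, row in enumerate(labels):
        for x, player in enumerate(row):
            if player == 0:
                continue  # background pixel
            z = depth_mm[y][x]
            if player not in bounds:
                bounds[player] = [x, x, y, y, z, z]
                continue
            b = bounds[player]
            b[0], b[1] = min(b[0], x), max(b[1], x)
            b[2], b[3] = min(b[2], y), max(b[3], y)
            b[4], b[5] = min(b[4], z), max(b[5], z)
    return {
        player: (b[1] - b[0] + 1, b[3] - b[2] + 1, b[5] - b[4])
        for player, b in bounds.items()
    }


# A tiny made-up frame with two players.
labels = [
    [0, 1, 1, 0, 2],
    [0, 1, 1, 0, 2],
    [0, 0, 0, 0, 2],
]
depth_mm = [
    [0, 2000, 2100, 0, 3000],
    [0, 2050, 2200, 0, 3050],
    [0, 0, 0, 0, 3100],
]
extents = player_extents(labels, depth_mm)
```

Comparing these extents from one frame to the next would give the "changes" in each dimension; a sudden growth in height, for example, might be read as a raised arm. Whether that is robust enough for gesture interpretation is an open question.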

Andre has been approaching the same problem as Esteban and Ben from a different direction, exploring Open Sound Control (OSC) and the Open Toolkit for Sensing People in Spaces (Open TSPS) as the interfaces with Unity. This approach may give us the option of using commodity webcams as the sensors. Many other artists and designers have used OSC as a kind of “universal translator,” middleware, or bridge technology to convey a stream of numerical data from one autonomous application to another. This approach also has the benefit that Open TSPS is Free and Open Source Software. Cost is always an issue, so we are trying to be as economical as possible.
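For a sense of what "a stream of numerical data" looks like on the wire, here is a minimal sketch in Python that builds an OSC 1.0 message by hand and sends it over UDP. The address `/player/1/extent`, the port `9000`, and the helper names are hypothetical, chosen for illustration. This is not the message layout Open TSPS itself emits, and a real project would more likely use an existing OSC library.

```python
import socket
import struct


def _osc_string(text: str) -> bytes:
    """Encode an OSC string: ASCII, null-terminated, padded to 4 bytes."""
    data = text.encode("ascii") + b"\x00"
    return data + b"\x00" * (-len(data) % 4)


def osc_message(address: str, *values: float) -> bytes:
    """Build an OSC message carrying only float32 arguments."""
    type_tags = "," + "f" * len(values)  # e.g. ",fff" for three floats
    payload = b"".join(struct.pack(">f", v) for v in values)  # big-endian
    return _osc_string(address) + _osc_string(type_tags) + payload


def send_osc(packet: bytes, host: str = "127.0.0.1", port: int = 9000) -> None:
    """Send one OSC packet over UDP (host and port are assumptions)."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(packet, (host, port))


# Hypothetical message: width, height, depth for player 1.
packet = osc_message("/player/1/extent", 2.0, 2.0, 200.0)
```

Calling `send_osc(packet)` would deliver the message to any OSC listener on that port, such as a receiver script inside Unity. Because the format is just an address plus typed numbers, the sender and receiver do not need to know anything else about each other, which is what makes OSC useful as a bridge.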

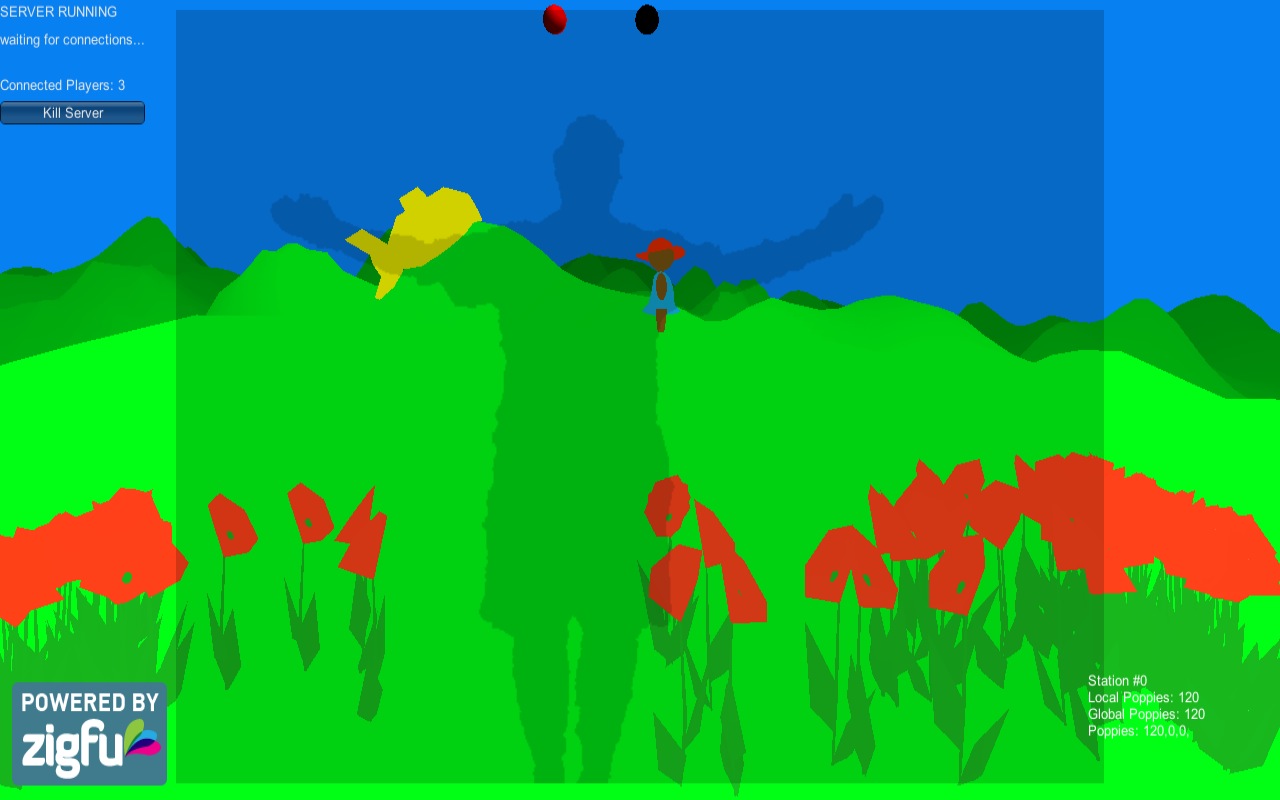

Teri has begun drafting visual models of the flowers and, now, of the non-player characters (NPCs) who will appear. Even before we found the work entitled “Ghost,” we had been talking about including ghostly apparitions as NPCs that would interact with the flowers sown by our players. We have wanted to continue working in the low-resolution aesthetic tradition of SWEAT, balanced with an endearing kawaii spirit. The guiding principles are the pursuit of the simple, the beautiful, and the intelligible.

I’m going to start investigating the viability of loading a Raspberry Pi with an instance of the game. If we could use Raspberry Pi + Sensor + Projector + Network as our installed technology, it would drop the overall cost significantly.