In this work we developed a wide-area tracking system built on consumer hardware, such as the Microsoft Kinect and Asus Xtion, together with available motion capture modules and middleware (OpenNI). We use multiple depth cameras (Kinects) for human pose tracking in order to enlarge the captured space.

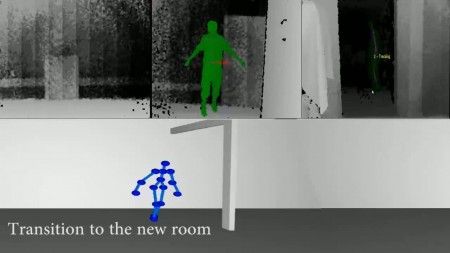

In this video you can see an installation of three Kinect depth cameras with overlapping tracking regions. A user walks within the extended tracking volume and his motion is captured continuously.

At some points the position of his arms cannot be tracked reliably, because the Kinects view him from the side and therefore cannot see the occluded body parts. Additional sensor coverage from the front or back would be needed to handle these cases.

Commercially available cameras can capture human movements in a non-intrusive way, while the associated software modules produce pose information for a simplified skeleton model. We calibrate the cameras relative to each other so that their tracking data can be combined seamlessly. Our design allows an arbitrary number of sensors to be integrated and used in parallel over a local area network. This enables us to capture human movements in a large, arbitrarily shaped area. In addition, we can improve the motion capture data in regions where the fields of view of multiple cameras overlap, because the cameras mutually complete partly occluded poses. In various examples we demonstrate how human pose data is merged to cover a wide area and how this data can easily be used for low-cost character animation in a virtual environment.
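To illustrate the idea of combining calibrated cameras, here is a minimal sketch in Python. It is not the method from the paper, whose details are in the full text. It assumes that each camera's calibration is available as a rigid transform (rotation plus translation) into a shared world frame, that every sensor reports a confidence value between 0 and 1 per joint, and that overlapping views are fused by a confidence-weighted average. All names (`to_world`, `merge_skeletons`, `min_conf`) and the sample coordinates are hypothetical.

```python
from typing import Dict, List, Tuple

Vec3 = Tuple[float, float, float]
# Rigid transform (rotation matrix rows, translation) mapping camera -> world.
Rigid = Tuple[Tuple[Vec3, Vec3, Vec3], Vec3]
# One camera's skeleton: joint name -> (position in camera frame, confidence).
Skeleton = Dict[str, Tuple[Vec3, float]]


def to_world(p: Vec3, cam_to_world: Rigid) -> Vec3:
    """Apply p_world = R * p_cam + t."""
    rot, t = cam_to_world
    return tuple(sum(rot[i][k] * p[k] for k in range(3)) + t[i] for i in range(3))


def merge_skeletons(views: List[Tuple[Skeleton, Rigid]],
                    min_conf: float = 0.5) -> Dict[str, Vec3]:
    """Confidence-weighted average of each joint over all cameras that see it."""
    sums: Dict[str, List[float]] = {}
    for skeleton, cam_to_world in views:
        for joint, (pos, conf) in skeleton.items():
            if conf < min_conf or conf <= 0.0:
                continue  # joint is occluded or unreliable in this view
            w = to_world(pos, cam_to_world)
            acc = sums.setdefault(joint, [0.0, 0.0, 0.0, 0.0])
            for i in range(3):
                acc[i] += conf * w[i]
            acc[3] += conf  # total weight for this joint
    return {j: (a[0] / a[3], a[1] / a[3], a[2] / a[3]) for j, a in sums.items()}


# Hypothetical setup: camera A sits at the world origin, camera B is shifted
# 2 m along x. Camera A cannot see the left hand; camera B fills it in.
IDENTITY = ((1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (0.0, 0.0, 1.0))
cam_a: Rigid = (IDENTITY, (0.0, 0.0, 0.0))
cam_b: Rigid = (IDENTITY, (2.0, 0.0, 0.0))

view_a: Skeleton = {"head": ((1.0, 1.0, 3.0), 1.0),
                    "left_hand": ((0.0, 0.0, 0.0), 0.0)}
view_b: Skeleton = {"head": ((-1.0, 1.0, 3.0), 1.0),
                    "left_hand": ((-1.5, 0.5, 3.0), 1.0)}

merged = merge_skeletons([(view_a, cam_a), (view_b, cam_b)])
print(merged)
```

In this sketch a joint that only one camera sees is taken from that camera alone, while a joint seen by several cameras is averaged. A joint that no camera tracks with sufficient confidence is simply absent from the result, which matches the sideways-view limitation described above.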

Please find details in the full paper:

Schönauer C., Kaufmann H., “Wide Area Motion Tracking Using Consumer Hardware”, in Proceedings of Workshop on Whole Body Interaction in Games and Entertainment, Advances in Computer Entertainment Technology (ACE 2011), Lisbon, Portugal, 2011.

via Wide Area Motion Capture with Multiple Kinects ‹ TrackingReality.