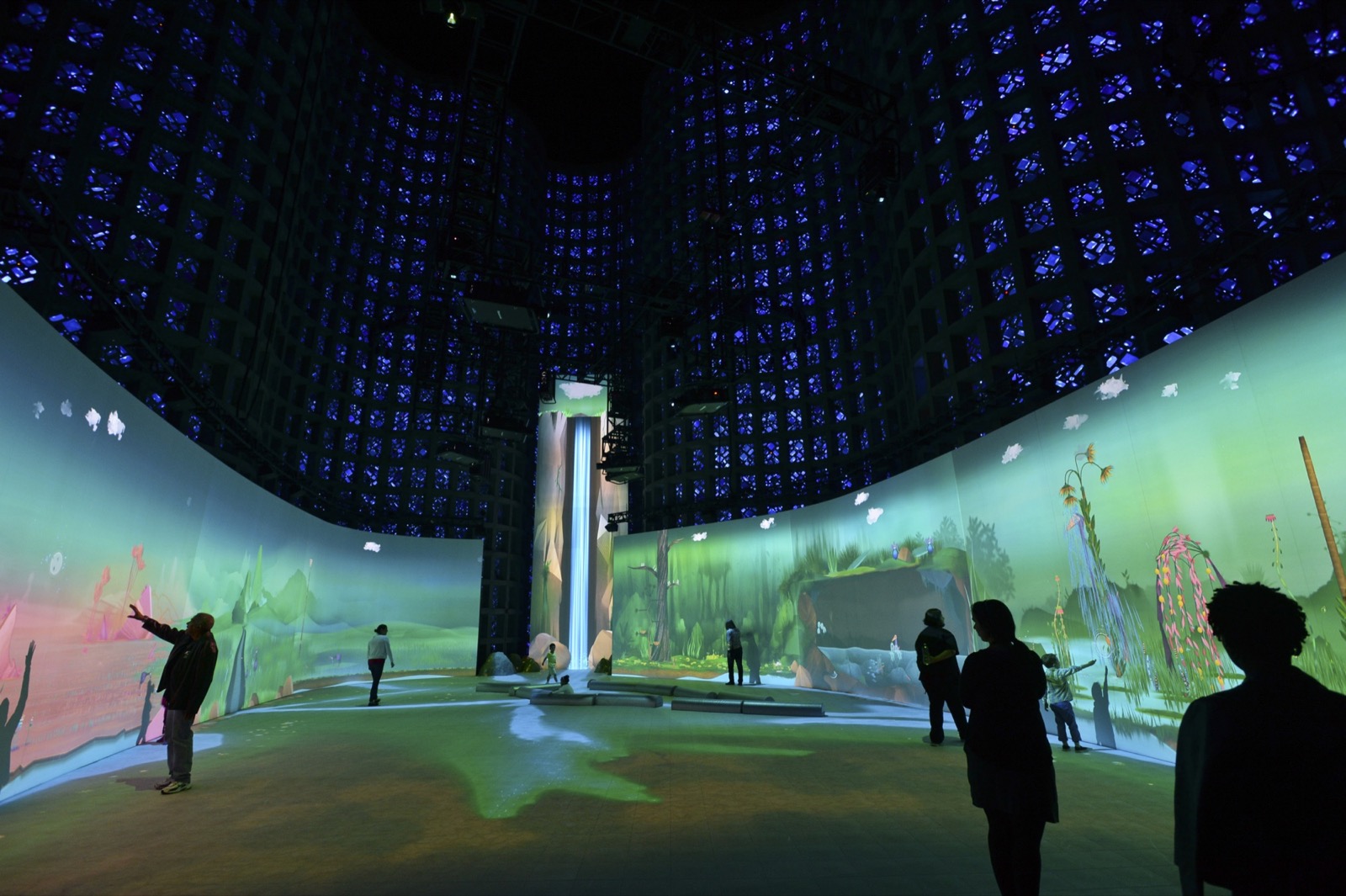

A large NSF-funded project that poetically explores ecosystems at scale. Blogged here for reference to its hardware and software details. Sow/Reap may benefit from their example.

Each environment in Connected Worlds runs on a separate machine, with software developed in openFrameworks. A total of 8 Mac Pros run the whole experience, driving 15 projectors: 7 for the floor, 6 for the wall environments and 2 for the Waterfall. For floor tracking, the team uses logs made of retroreflective fabric, tracked by three IR cameras. For the wall interactions, they do custom hand tracking and gesture detection from above using 12 Kinects, two per environment. To eliminate perspective issues from using multiple Kinects, they combine the point clouds into a single space and then use an orthographic ofCamera to look at them from a single viewpoint.
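To make the point-cloud merging idea concrete, here is a minimal sketch in plain Python rather than the team's openFrameworks code. It assumes each sensor has a known pose (a yaw rotation plus a translation) in a shared room coordinate system. The function names, poses and sample coordinates are hypothetical and only illustrate the technique: transform every cloud into one space, then project without a perspective divide.

```python
import math

def make_pose(yaw_deg, tx, ty, tz):
    """Hypothetical sensor pose: rotation about the vertical (y) axis plus a translation."""
    c = math.cos(math.radians(yaw_deg))
    s = math.sin(math.radians(yaw_deg))
    rotation = ((c, 0.0, s), (0.0, 1.0, 0.0), (-s, 0.0, c))
    return rotation, (tx, ty, tz)

def to_world(point, pose):
    """Move one point from a sensor's local space into the shared space."""
    rotation, translation = pose
    return tuple(
        sum(rotation[row][k] * point[k] for k in range(3)) + translation[row]
        for row in range(3)
    )

def merge_clouds(clouds_with_poses):
    """Combine several (cloud, pose) pairs into a single list of world-space points."""
    merged = []
    for cloud, pose in clouds_with_poses:
        merged.extend(to_world(p, pose) for p in cloud)
    return merged

def orthographic_top_down(points):
    """Orthographic view from above: drop the height (y) axis, no perspective divide."""
    return [(x, z) for x, _y, z in points]

# Two sensors one metre apart see the same hand from different local positions.
sensor_a = make_pose(0.0, 0.0, 0.0, 0.0)
sensor_b = make_pose(0.0, 1.0, 0.0, 0.0)
merged = merge_clouds([
    ([(0.5, 1.0, 2.0)], sensor_a),
    ([(-0.5, 1.0, 2.0)], sensor_b),
])
flat = orthographic_top_down(merged)
```

Because the projection ignores distance from the viewer, a hand seen by either sensor lands at the same 2D position once both clouds share one space, which is the property the orthographic camera provides.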

The eight machines driving Connected Worlds all communicate with each other using OSC over the router's broadcast address. This lets every machine exchange information without needing a server/client relationship. When a creature migrates between machines, it is turned into a series of ofParameters and added to an OSC blob as a zipped binary buffer. The destination environment then unzips the blob and creates a duplicate creature from those ofParameters.
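The migration step can be sketched roughly as below, again in plain Python rather than openFrameworks. The assumptions are mine, not the project's: the creature's parameters are serialized as JSON, compressed with zlib, and carried as the single blob argument of a hand-built OSC message. The address `/creature/migrate`, the parameter names and port 9000 are hypothetical.

```python
import json
import socket
import struct
import zlib

def _pad4(data):
    """Zero-pad to a multiple of 4 bytes, as OSC requires."""
    return data + b"\x00" * (-len(data) % 4)

def _osc_string(text):
    """OSC strings are null-terminated, then padded to 4 bytes."""
    return _pad4(text.encode("ascii") + b"\x00")

def pack_creature(address, params):
    """Build an OSC message whose only argument is a zipped parameter blob."""
    payload = zlib.compress(json.dumps(params, sort_keys=True).encode("utf-8"))
    blob = struct.pack(">i", len(payload)) + _pad4(payload)
    return _osc_string(address) + _osc_string(",b") + blob

def _read_osc_string(packet, offset):
    """Read one padded OSC string and return it with the next offset."""
    end = packet.index(b"\x00", offset)
    text = packet[offset:end].decode("ascii")
    next_offset = offset + (end - offset + 4) // 4 * 4
    return text, next_offset

def unpack_creature(packet):
    """Reverse pack_creature: return (address, params) from a received packet."""
    address, offset = _read_osc_string(packet, 0)
    type_tags, offset = _read_osc_string(packet, offset)
    if type_tags != ",b":
        raise ValueError("expected a single blob argument")
    (size,) = struct.unpack_from(">i", packet, offset)
    payload = packet[offset + 4 : offset + 4 + size]
    return address, json.loads(zlib.decompress(payload).decode("utf-8"))

def broadcast(packet, port=9000, host="255.255.255.255"):
    """Send the packet to every machine on the subnet; no server is involved."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.setsockopt(socket.SOL_SOCKET, socket.SO_BROADCAST, 1)
        sock.sendto(packet, (host, port))

# Hypothetical creature state; the real parameter set is not described in the source.
creature = {"species": "frog", "energy": 0.5, "x": 12.0, "y": 3.0}
packet = pack_creature("/creature/migrate", creature)
address, restored = unpack_creature(packet)
```

A receiving machine would listen on the same UDP port, call `unpack_creature` on each datagram, and build a new creature from the restored parameters. Since broadcast delivers to every machine, the message would also need to say which environment is the destination, for example in the address or the parameters.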

via Connected Worlds – Interactive ecosystem for @nysci by @design_io.