Andre has continued to research alternative middleware and hardware. He has OpenTSPS recognizing a rapid backhand gesture (think ping pong), and the recognition is rock solid. He has also been looking at hardware packages, including IR cameras, that may give us robust alternatives to the Kinect sensors. And he has been investigating contextual, event-driven audio, which would add MaxMSP to our software mix. This last bit is meant to address the question “What would this space sound like?”

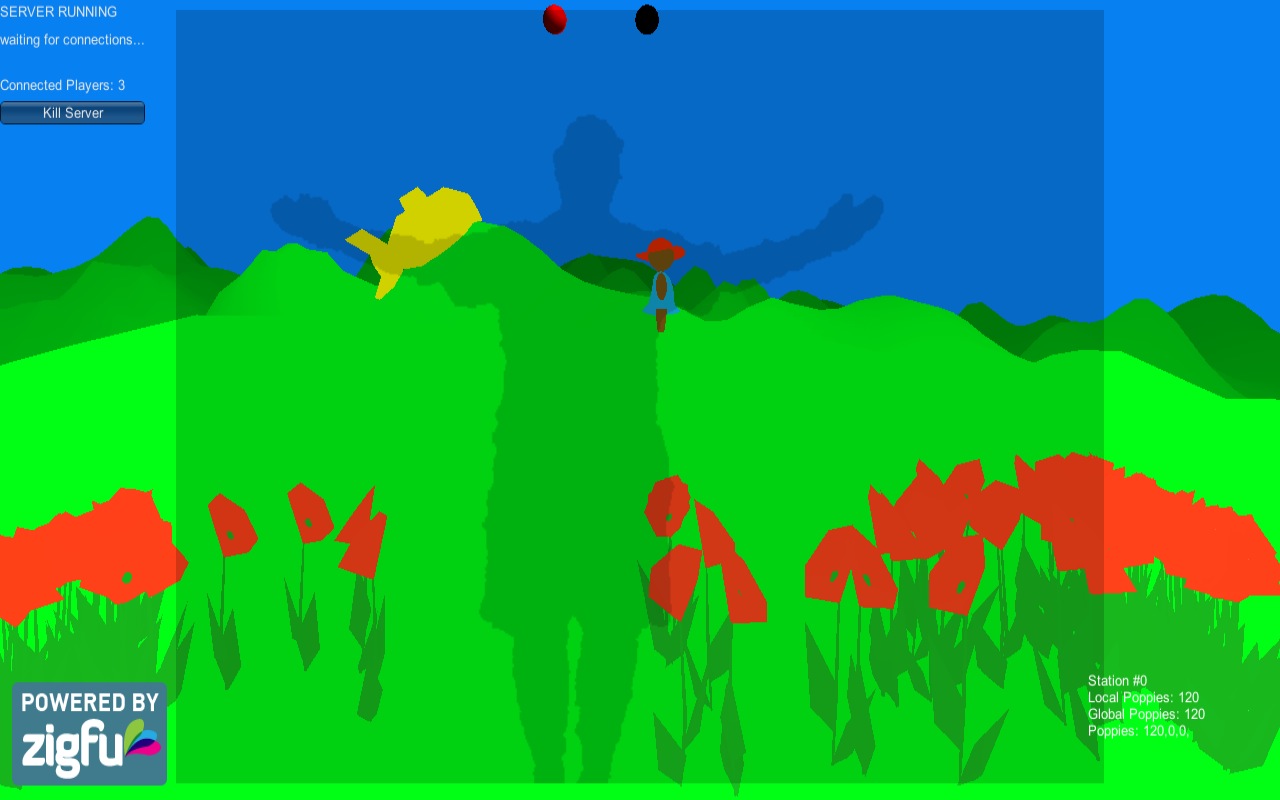

Ben, Tommy, and Esteban have continued to develop for the OpenNI, NITE2, ZigFu, Unity3D, and Kinect environment. They have each been creating Unity scripts in C# that now have to be integrated. Tommy has written scripts that will allow us to bring weather data into the game, which will drive the wind algorithms. It is an attractive prospect to have the individual models of the poppies respond to an in-game breeze. Tommy has also adapted the client-server scripts that will allow us to scale the game up to a number of environments. He has made the server auto-detect clients/players and automatically resolve and assign IP addresses.

Ben has put several of the models that Teri made into the environments. We have the women farmers appearing after a certain number of flowers have been sown. The narco-submarine is also now on a hilltop.

Tommy, Ben, and Esteban have been experimenting with the kinds of feedback given to the player. They have settled on projecting the player's data shadow into the game environment, along with a color-coded sphere that signals what the player's gesture should yield. This is a nice, quiet UI.

It feels as though we may be ready for an alpha-stage playtest on Wednesday.